
AI in Military Medicine: What Leaders Must Know


Author and clinical perspective

Chester "Chet" Shermer, MD, FACEP

Founder, Global MedOps Command

Dr. Chet Shermer leads Global MedOps Command to help emergency physicians, EMS teams, and operational medical leaders strengthen clinical judgment, adopt AI responsibly, and train for high-stakes decisions.

The United States military has always been an early adopter of technology that promises operational advantage. Night vision. GPS. Unmanned aerial systems. In each case, the technology moved faster than the doctrine, and the gap between capability and training produced casualties before it produced competency. Artificial intelligence in military medicine is following the same pattern.

The difference this time is that the stakes are clinical as well as operational. AI tools that affect triage decisions, diagnostic accuracy, treatment recommendations, and medevac prioritization are not merely tactical systems. They are medical interventions. And the military medical leaders — flight surgeons, battalion surgeons, MEDEVAC medical directors, unit medical commanders — who do not understand their capabilities and failure modes will make worse decisions because of them, not better ones.

This is written from the perspective of a military physician who has spent over two decades navigating the intersection of operational medicine and rapidly evolving technology. The intent is not to generate enthusiasm for AI in military medicine. There is enough of that. The intent is to generate informed, critical engagement.

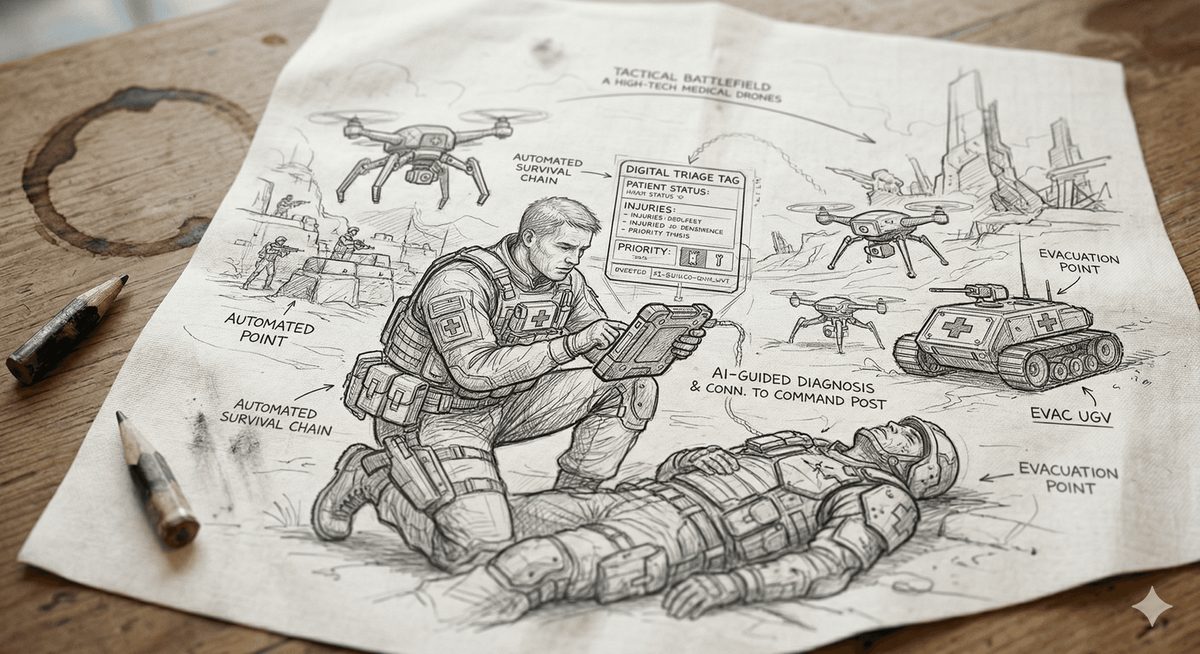

WHERE AI IS ALREADY OPERATIONAL IN MILITARY MEDICINE

The military AI footprint in medicine is broader than most practitioners at the unit level realize, because much of it is embedded in platforms and systems rather than presented as standalone AI tools.

Telemedicine platforms with AI-assisted clinical decision support are deployed across multiple military medical contexts. The ability to transmit patient data — vital signs, images, ECG tracings — from the point of injury to a remote physician or specialty consultant has been a military capability for years. The AI layer being added to these platforms performs triage of incoming data streams, flags physiologic deterioration, and in some implementations generates preliminary diagnostic assessments that the remote physician reviews. The remote physician remains the decision authority. But the information they receive has already been processed, filtered, and prioritized by an algorithm.
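The filtering step described above can be made concrete with a small sketch. It is illustrative only and does not describe any fielded system: the `VitalsPacket` type, the `prioritize` function, and the shock index cutoff of 1.0 are assumptions chosen for the example, not validated clinical thresholds. What it shows is that the reviewing physician receives an ordering the algorithm has already chosen, so the sketch drops nothing and records the reason for every flag.

```python
from dataclasses import dataclass, field


@dataclass
class VitalsPacket:
    """One transmission from the point of injury (hypothetical schema)."""
    casualty_id: str
    heart_rate: int    # beats per minute
    systolic_bp: int   # mmHg
    flags: list = field(default_factory=list)


# Assumed cutoff for illustration only; not a validated clinical threshold.
SHOCK_INDEX_CUTOFF = 1.0


def shock_index(packet: VitalsPacket) -> float:
    """Shock index = heart rate divided by systolic blood pressure."""
    if packet.systolic_bp <= 0:
        return float("inf")  # unmeasurable pressure sorts to the top
    return packet.heart_rate / packet.systolic_bp


def prioritize(packets):
    """Order packets for physician review, most concerning first.

    Every packet still reaches the physician, and each flag states
    why the algorithm raised it.
    """
    for p in packets:
        si = shock_index(p)
        if si >= SHOCK_INDEX_CUTOFF:
            p.flags.append(f"shock index {si:.2f} >= {SHOCK_INDEX_CUTOFF}")
    return sorted(packets, key=shock_index, reverse=True)


queue = prioritize([
    VitalsPacket("A", heart_rate=88, systolic_bp=122),
    VitalsPacket("B", heart_rate=132, systolic_bp=84),
    VitalsPacket("C", heart_rate=104, systolic_bp=110),
])
for p in queue:
    print(p.casualty_id, p.flags or "no flags")
```

The design choice worth noticing is that the algorithm reorders and annotates but never removes a casualty from the queue. A physician who knows the ordering rule can question it; one who sees only the top of a filtered list cannot.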

TCCC-compliant wearable biosensors with continuous physiologic monitoring are in various stages of fielding and evaluation. The operational vision is continuous real-time physiologic data from every casualty in a mass casualty (MASCAL) event, transmitted to the medical commander's dashboard, and it represents a genuine capability advance. Four practical problems remain active: sensor placement in a tactical environment, battery life, data transmission under communications degradation, and the cognitive load that a real-time multi-patient data stream places on the medical commander.

Medical logistics AI — predictive modeling for blood product consumption, surgical supply demand, and pharmacy inventory in deployed settings — is operationally active in theater medical support planning. This is perhaps the least clinically visible application but has significant downstream impact on the resources available at the point of care.

Radiology AI in deployed settings is an active area of development. The ability to provide AI-assisted interpretation of digital X-ray and portable CT imaging at Role 2 and Role 3 facilities, in environments where radiologist support may be limited or delayed, addresses a genuine capability gap. The validation questions — performance on blast injury patterns, performance on patient populations from different geographic and demographic backgrounds than the training datasets — are not fully answered.


THE DOCTRINE GAP: WHERE THE MILITARY IS BEHIND

Military medical doctrine has not kept pace with AI capability development, and the gap is consequential. The questions that need doctrinal answers are not being answered at the unit level, because unit-level medical commanders have neither the authority nor the institutional support to answer them. The answers need to come from the medical command structure.

What is the command authority framework for AI-assisted triage in a MASCAL event? If an AI system recommends a different triage category than the trained medic's assessment, who decides — and on what basis? This is not a hypothetical. It will happen. The time to establish that command framework is in training, not on the objective.
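One way to establish that framework in training is to write the rule down in a form that can be rehearsed and audited. The sketch below is an assumption about what such a rule might look like, not doctrine. The `resolve_triage` function and the policy it encodes (the medic's category stands, and every disagreement is recorded for medical commander review) are hypothetical; a command could just as well choose a different rule. The category names follow the commonly used immediate, delayed, minimal, and expectant scheme.

```python
from dataclasses import dataclass
from enum import Enum


class Triage(Enum):
    IMMEDIATE = "immediate"
    DELAYED = "delayed"
    MINIMAL = "minimal"
    EXPECTANT = "expectant"


@dataclass(frozen=True)
class TriageDecision:
    category: Triage     # the category that will be acted on
    decided_by: str      # who holds decision authority under this policy
    disagreement: bool   # True when the AI suggested something else
    note: str            # text kept for after-action review


def resolve_triage(medic: Triage, ai: Triage) -> TriageDecision:
    """Hypothetical policy: the medic decides; disagreements are logged."""
    if medic == ai:
        return TriageDecision(medic, "medic", False, "AI concurs")
    return TriageDecision(
        medic,
        "medic",
        True,
        f"AI suggested {ai.name}; flag for medical commander review",
    )


decision = resolve_triage(medic=Triage.DELAYED, ai=Triage.IMMEDIATE)
print(decision.category.name, "|", decision.note)
```

Whatever rule a command adopts, the value of writing it this explicitly is that the medic knows who decides before the disagreement occurs, and the record of each disagreement exists for review afterward.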

What are the rules of engagement for AI-generated treatment recommendations in a degraded communications environment? If a medic is operating without access to a remote physician and an AI clinical decision support tool recommends an intervention outside their standard scope, what is the authorization framework? The medical commander who has not thought through this problem in advance will face it under the worst possible conditions.

What is the data security framework for AI-enabled medical systems in a contested electromagnetic environment? Patient medical data transmitted to AI cloud processing infrastructure represents an intelligence vulnerability. The enemy's ability to intercept and exploit medical data from the battlefield is not a theoretical concern. Military AI medical systems need adversarial security evaluation alongside clinical validation.

These are medical command problems, not technology problems. They require physician leadership to define, and they require that physician leadership to have sufficient AI literacy to engage with them substantively.

TRAINING THE FORCE FOR AI-INTEGRATED MEDICAL OPERATIONS

The medic who has never operated with AI-assisted clinical decision support and encounters it for the first time in a tactical environment is not equipped to use it effectively. The medic who has been trained to treat AI outputs as authoritative rather than advisory is potentially more dangerous than one with no AI tools at all.

Military medical training for AI integration needs to address three competencies that current training pipelines are not systematically developing. The first is basic AI literacy — what kind of tool is this, what was it trained on, what does its output mean, and what are its known failure modes. The second is override criteria — the prospective threshold-setting that defines when the medic or physician acts against the algorithm's recommendation, and on what clinical basis. The third is degraded operations — how does the clinical workflow change when AI-assisted tools are unavailable, whether from battery failure, communications degradation, system compromise, or simply moving outside the tool's validated operating parameters.
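The third competency lends itself to a checklist that can be drilled. The following sketch is a minimal illustration under stated assumptions: the `ToolStatus` fields and the `operating_mode` function are hypothetical names, and the four checks simply mirror the four conditions listed above. It is a training aid, not a description of how any fielded tool reports its status.

```python
from dataclasses import dataclass


@dataclass
class ToolStatus:
    """Hypothetical self-report from an AI decision support tool."""
    powered: bool
    link_up: bool
    integrity_ok: bool
    within_validated_envelope: bool


def operating_mode(status: ToolStatus):
    """Return the workflow to use and the reasons for any fallback."""
    reasons = []
    if not status.powered:
        reasons.append("battery failure")
    if not status.link_up:
        reasons.append("communications degraded")
    if not status.integrity_ok:
        reasons.append("suspected system compromise")
    if not status.within_validated_envelope:
        reasons.append("outside validated operating parameters")
    # Any single failed check moves the team to the non-AI workflow.
    mode = "MANUAL_PROTOCOL" if reasons else "AI_ADVISORY"
    return mode, reasons


mode, reasons = operating_mode(
    ToolStatus(powered=True, link_up=False,
               integrity_ok=True, within_validated_envelope=True)
)
print(mode, reasons)
```

Note that even the best case returns an advisory mode. Nothing in the sketch gives the tool decision authority, which matches the position that AI outputs are advisory rather than authoritative.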

A force that can operate effectively with AI tools and without them is more capable than one optimized for either condition alone. That is the training standard military medicine should be building toward.

THE FLIGHT SURGEON'S POSITION

Flight surgeons occupy a unique position in the military AI medicine conversation. They bridge aviation safety culture — which has decades of experience integrating automated systems into high-stakes human performance environments — and military medicine's clinical accountability framework. Aviation's approach to automation, crew resource management, and defined override authority for pilots facing automated system failures is directly applicable to the military medical AI context.

The flight surgeon who understands both domains is positioned to contribute meaningfully to how military medicine develops its AI doctrine, training standards, and command frameworks. That contribution requires active engagement with the AI transition, not observation from the sideline.

---DR. CHET'S TAKE---

I have served as a Medical Corps officer long enough to have watched multiple technology transitions move faster than the doctrine written to govern them. Every time, the gap between capability and doctrine was filled by the judgment of individual medical officers in the field — which is sometimes a strength and always a risk.

AI in military medicine is the most consequential technology transition I have seen in my career, because it operates directly on clinical decision-making at the point of injury. The medic on the objective, the flight surgeon at the Role 2, the medical commander at the MASCAL scene — they are all going to be using AI-augmented tools in the next conflict. The question is whether they will have been trained to use them with the same discipline and command awareness they bring to every other aspect of their medical mission.

In my view, the military medicine community needs to lead this conversation, not follow it. The operational and clinical stakes are too high to wait for doctrine to catch up. Medical commanders need to be developing unit-level AI governance frameworks now — defining override criteria, establishing training standards, and building the institutional culture of critical AI engagement that will protect their soldiers and their own command authority.

That work starts with individual medical officers who are willing to engage seriously with what AI can and cannot do. It starts with the decision to become genuinely informed rather than passively equipped.

— Dr. Chester "Chet" Shermer, MD, FACEP is a Professor of Emergency Medicine, Medical Director for Air Medical and Critical Care Transport programs, and a military medical officer with the Army National Guard. He is the founder of Global MedOps Command and the creator of AI in Emergency Medicine: Becoming AI Bulletproof.

AI Won't Wait. Neither Should You.

The doctrine gap described in this post is real, it is widening, and it is creating risk for military medical commanders and the soldiers they serve. Medical officers who build AI fluency now — who understand how these tools work, where they fail, and how to establish command authority over AI-assisted medical decisions — will lead more capable, more resilient medical operations than those who engage with this transition reactively.

Consider enrolling in my course: AI in Emergency Medicine: Becoming AI Bulletproof — a physician-built course covering AI clinical decision support, documentation risk, and the command frameworks designed for the high-stakes, high-tempo environments where military and emergency medicine intersect.


Through courses, simulation platforms, books, and practical resources, Dr. Shermer translates frontline emergency medicine, transport, and military leadership experience into tools clinicians can use immediately.

This article is published through Global MedOps Command to help emergency clinicians evaluate AI, workflow, and operational decisions with a physician-led perspective.

Clinical application depth

Operational AI is only valuable when it changes a real decision path inside the department or agency.

Prediction without a linked operational response usually becomes dashboard theater. The useful version of this topic is the one that changes staffing, escalation, throughput, readiness, or after-action review in a way your team can audit later.
